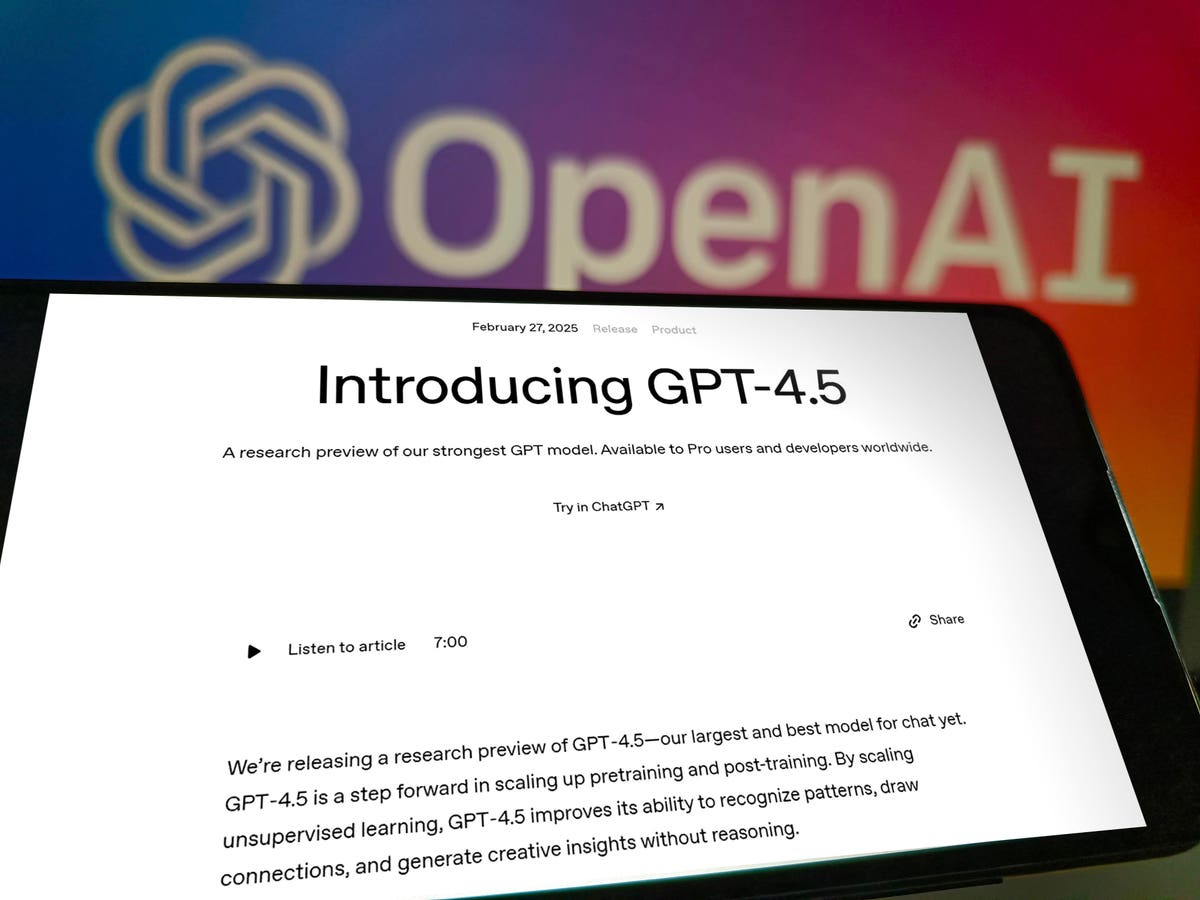

OpenAI’s GPT-4.5 Drops

From Heavy to Lean AI: A Parallel to Computing History

The Rise of Reasoning Models and Smarter Fine-Tuning

A fresh wave of large language models (LLMs) is battling for attention, but can they become smarter, faster, and cheaper at the same time? The emergence of DeepSeek R1 signals that the future of AI might not belong to the largest or most data-hungry models, but to those that master data efficiency by innovating on machine learning methods.

New Models Offer Budget Flexibility

This shift toward efficiency echoes the evolution of computing itself. In the 1940s and ’50s, room-sized mainframe computers relied on thousands of vacuum tubes, resistors, capacitors, and more. They consumed enormous amounts of energy, and only a few countries could afford them. As computing technology advanced, microchips and CPUs ushered in the personal computing revolution, dramatically reducing size and cost while boosting performance.

The Future of AI

Today’s state-of-the-art LLMs, capable of generating text, writing code, and analyzing data, rely on colossal infrastructure for training, storage, and inference. These processes demand not only vast computational resources but also staggering amounts of energy.

The Transition to Lean AI

The transition from centralized, data-hungry behemoths to nimble, personalized, and hyper-efficient models is already underway. The key lies not in endlessly expanding datasets but in learning how to learn better – maximizing insights from minimal data.

Jiayi Pan and Fei-Fei Li’s Research

Researchers such as Jiayi Pan at Berkeley and Fei-Fei Li at Stanford have already demonstrated this in action. Jiayi Pan replicated DeepSeek R1 for just $30 using reinforcement learning. Fei-Fei Li proposed test-time fine-tuning techniques to replicate DeepSeek R1’s core capabilities for only $50.

Open-Source AI Development

Another crucial enabler of this shift is open-source AI development. By opening up the underlying models and techniques, the field can crowdsource innovation – inviting smaller research labs, startups, and even independent developers to experiment with more efficient training methods.

Conclusion

The future of AI is not just about the size of the models, but about how efficiently they can learn and process information. As the LLM arms race intensifies, the companies and research teams that crack the code of efficient intelligence will not only cut costs but unlock new possibilities for personalized AI, edge computing, and global accessibility.

FAQs

Q: What is the significance of DeepSeek R1’s emergence?

A: DeepSeek R1 signals that the future of AI might not belong to the largest or most data-hungry models, but to those that master data efficiency by innovating on machine learning methods.

Q: How do LLMs currently work?

A: LLMs rely on colossal infrastructure for training, storage, and inference, demanding vast computational resources and energy.

Q: What is the potential impact of the transition to lean AI?

A: The transition to lean AI could reduce AI’s reliance on giant data centers, making AI development more sustainable and environmentally friendly. It could also accelerate innovation in embodied intelligence and robotics, where onboard processing power and real-time reasoning are critical.
